Energy Efficient Artificial Intelligence

Artificial intelligence is often discussed in terms of its ethical concerns, but it also poses major sustainability problems. MIT researchers have developed a new automated AI system for training and running certain neural networks. Results indicate that, by improving the system's computational efficiency, it can cut the carbon emissions involved, in some cases down to the low triple digits of pounds.

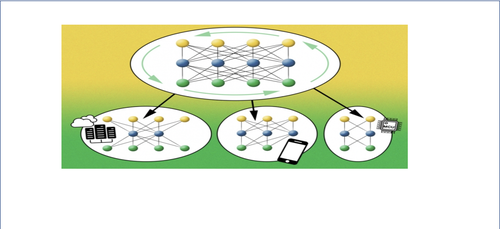

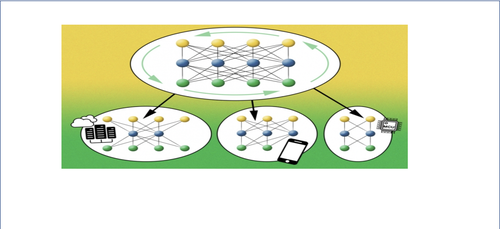

The researchers’ system, which they call a once-for-all network, trains one large neural network comprising many pretrained subnetworks of different sizes that can be tailored to diverse hardware platforms without retraining. This dramatically reduces the energy usually required to train each specialized neural network for new platforms, which can include billions of Internet of Things (IoT) devices. Using the system to train a computer-vision model, they estimated that the process produced roughly 1/1,300 of the carbon emissions of today’s state-of-the-art neural architecture search approaches, while reducing inference time by a factor of 1.5 to 2.6.

“The aim is smaller, greener neural networks,” says Song Han, an assistant professor in the Department of Electrical Engineering and Computer Science. “Searching efficient neural network architectures has until now had a huge carbon footprint. But we reduced that footprint by orders of magnitude with these new methods.”

The researchers built the system on a recent AI advance called AutoML (for automatic machine learning), which eliminates manual network design. Their AutoML system trains only a single, large “once-for-all” (OFA) network that serves as a “mother” network, nesting an extremely large number of subnetworks that are sparsely activated from it. OFA shares all its learned weights with all subnetworks, meaning they come essentially pretrained. Thus, each subnetwork can operate independently at inference time without retraining.
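To make the weight-sharing idea concrete, here is a minimal sketch in plain Python. It is not the researchers' implementation: the `MotherNetwork` class, its `run_subnetwork` method, and the choice to vary only layer width are assumptions made for illustration, and the weights are random rather than trained. It only shows how a smaller subnetwork can run by reading a slice of the mother network's weights, without copying or retraining them.

```python
import random


def relu(values):
    """Element-wise ReLU on a list of floats."""
    return [max(0.0, v) for v in values]


class MotherNetwork:
    """Hypothetical fully connected 'mother' network with shared weights.

    Each layer stores one weight matrix at its maximum width. A subnetwork
    is defined by a narrower width per layer and reuses the leading rows
    and columns of those same matrices.
    """

    def __init__(self, input_size, max_widths, seed=0):
        rng = random.Random(seed)  # random stand-in for trained weights
        self.input_size = input_size
        self.weights = []
        fan_in = input_size
        for width in max_widths:
            layer = [[rng.uniform(-1.0, 1.0) for _ in range(fan_in)]
                     for _ in range(width)]
            self.weights.append(layer)
            fan_in = width

    def run_subnetwork(self, x, widths):
        """Forward pass using only the first `widths[i]` units of layer i."""
        if len(x) != self.input_size:
            raise ValueError("input has the wrong size")
        if len(widths) != len(self.weights):
            raise ValueError("need one width per layer")
        activations = list(x)
        for layer, width in zip(self.weights, widths):
            if not 1 <= width <= len(layer):
                raise ValueError("width outside the mother network's range")
            n_in = len(activations)
            # Slice the shared matrix: first `width` rows, first `n_in` columns.
            activations = relu([
                sum(w * a for w, a in zip(row[:n_in], activations))
                for row in layer[:width]
            ])
        return activations


mother = MotherNetwork(input_size=4, max_widths=[8, 8, 3])
x = [0.5, -1.0, 2.0, 0.1]
full = mother.run_subnetwork(x, [8, 8, 3])   # the whole mother network
small = mother.run_subnetwork(x, [2, 4, 3])  # a narrower subnetwork
print(len(full), len(small))
```

Both calls read from the same `mother.weights`, so picking a subnetwork for a given device is a matter of choosing widths rather than training a new model. In the real system the shared weights are trained so that the subnetworks are accurate; this sketch does not attempt that step.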

“If rapid progress in AI is to continue, we need to reduce its environmental impact,” says John Cohn, an IBM fellow and member of the MIT-IBM Watson AI Lab. “The upside of developing methods to make AI models smaller and more efficient is that the models may also perform better.”
