How People Make Sense of Information

As a student in the MIT Laboratory for Information and Decision Systems (LIDS) and in the MIT Institute for Data, Systems, and Society (IDSS), Manon Revel has been investigating how advertising in online publications affects trust in journalism. The topic area is familiar for Revel; growing up, she wanted to be a journalist to follow in her father's footsteps. As a teenager, Revel was assigned her first reporting gig covering a holiday fireworks display for the local radio station near her home on the Atlantic coast of France. Revel researched every one of the dozens of types of fireworks that would be launched and spent hours interviewing the person who would launch them before reporting live on the night. Revel continued reporting for radio and print (she started a high school newspaper that is still active), using the same thorough approach to prepare for each interview. “I wanted to ask interesting people questions that they had never been asked before, so I read everything I could on them beforehand and then I tried to learn something new,” Revel says.

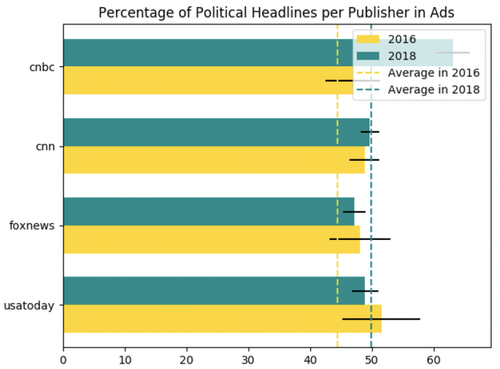

After the 2016 United States presidential election, Revel took a deep dive into journalism from an analytical perspective. Current events had underscored the importance of understanding how information is disseminated in the digital era, for better and worse, and how the people receiving it make sense of it. Revel’s main project, together with a fellow graduate student in LIDS, Amir Tohidi, has been investigating the effect of “clickbait” ads on reader trust. As publications have struggled with online revenue models, many have come to rely increasingly on “native ads” that are designed to blend in with news stories and are often supplied by a third-party network. The majority of these ads are clickbait, which Revel and her colleagues define as “something (such as a headline) designed to make readers want to click on a hyperlink, especially when the link leads to content of dubious value or interest.”

Revel wanted to quantify exactly how prevalent clickbait is, so she set up a Bayesian text classification program to recognize it. The classifier was trained on two data sets labeled by users on Amazon’s Mechanical Turk service. Then, Revel scraped over 1.4 million ads published from 2016 to 2019 and, using her text classification program, found that more than 80 percent were clickbait.

Next, Revel ran two large-scale randomized experiments, which showed that one-time exposure to clickbait near an article could reduce readers’ trust in the article and the publication. That effect was driven by medium-familiarity publications, defined as outlets recognized by 25-50 percent of the audience. By contrast, the ads had no detectable effect on trust in the most well-known outlets, like CNN and Fox News.
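For readers curious about what Bayesian text classification looks like in practice, the sketch below shows one common variant, a multinomial naive Bayes classifier with Laplace smoothing. The article does not say which Bayesian model Revel used, so this is an illustrative assumption rather than her method. The class name, the `clickbait_share` helper, and the six toy headlines are all hypothetical and stand in for the crowd-labeled data.

```python
import math
import re
from collections import Counter


def tokenize(text):
    """Lowercase the text and split it into simple word tokens."""
    return re.findall(r"[a-z0-9']+", text.lower())


class NaiveBayesTextClassifier:
    """Multinomial naive Bayes with Laplace (add-alpha) smoothing.

    Hypothetical illustration; not the classifier used in the study.
    """

    def __init__(self, alpha=1.0):
        self.alpha = alpha
        self.word_counts = {}        # label -> Counter of token counts
        self.doc_counts = Counter()  # label -> number of training texts
        self.vocab = set()

    def fit(self, texts, labels):
        for text, label in zip(texts, labels):
            self.doc_counts[label] += 1
            counts = self.word_counts.setdefault(label, Counter())
            for token in tokenize(text):
                counts[token] += 1
                self.vocab.add(token)
        return self

    def log_scores(self, text):
        """Return the unnormalized log posterior for each label."""
        total_docs = sum(self.doc_counts.values())
        vocab_size = len(self.vocab)
        scores = {}
        for label, n_docs in self.doc_counts.items():
            counts = self.word_counts[label]
            total_tokens = sum(counts.values())
            score = math.log(n_docs / total_docs)  # log prior
            for token in tokenize(text):
                if token not in self.vocab:
                    continue  # ignore words never seen in training
                score += math.log(
                    (counts[token] + self.alpha)
                    / (total_tokens + self.alpha * vocab_size)
                )
            scores[label] = score
        return scores

    def predict(self, text):
        scores = self.log_scores(text)
        return max(scores, key=scores.get)


def clickbait_share(classifier, ads):
    """Fraction of ads that the classifier labels as clickbait."""
    flagged = sum(1 for ad in ads if classifier.predict(ad) == "clickbait")
    return flagged / len(ads)


# Toy, made-up training examples standing in for crowd-labeled ads.
train_texts = [
    "You won't believe what this celebrity looks like now",
    "Doctors hate this one weird trick",
    "10 shocking photos you must see",
    "City council approves new transit budget",
    "Central bank holds interest rates steady",
    "Researchers publish study on coastal erosion",
]
train_labels = ["clickbait"] * 3 + ["other"] * 3

clf = NaiveBayesTextClassifier().fit(train_texts, train_labels)
```

Once trained, `clf.predict(headline)` labels a single ad, and `clickbait_share(clf, ads)` estimates the proportion of clickbait in a scraped collection, which is the kind of aggregate figure reported above. A real study would need far more labeled data and a held-out set to check accuracy.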

Clickbait among house ads (from Revel’s MS thesis, titled “The Effects of Native Advertisement on the US News Industry”)

Revel hopes that the project will raise awareness among news publications that reaping the short-term financial rewards of native advertising may cost them readers in the long term. She acknowledges that there’s not an easy solution, as publishers may not be able to afford losing the revenue. However, reader trust in high-quality journalism is a crucial issue in a time of rampant “information disorder,” which is the umbrella term that researchers like Revel use to describe the combination of intentionally or maliciously false information, unwittingly shared misinformation, and true but harmful information that spreads like wildfire through the internet.
