Improving Smartphone Security via Artificial Intelligence

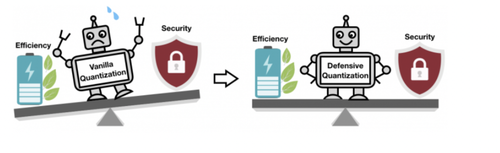

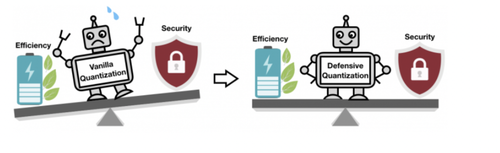

Smartphones, security cameras, and speakers are just a few of the devices that will soon be running more artificial intelligence software to speed up image- and speech-processing tasks. A compression technique known as quantization is smoothing the way by making deep learning models smaller to reduce computation and energy costs. But smaller models make it easier for malicious attackers to trick an AI system into misbehaving. When a deep learning model is reduced from the standard 32 bits to a lower bit length, it is more likely to misclassify altered images due to an error amplification effect: The manipulated image becomes more distorted with each extra layer of processing. By the end, the model is more likely to mistake a bird for a cat, for example, or a frog for a deer.
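To make the idea of a lower bit length concrete, here is a minimal sketch of uniform quantization in plain Python. It is an illustration only, not the scheme used in the study: the `quantize` helper and the sample `weights` are hypothetical. It shows that fewer bits leave fewer distinct values to represent each weight, so the rounding error grows.

```python
def quantize(values, bits):
    """Snap each value to one of 2**bits evenly spaced levels (illustrative helper)."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return list(values)
    levels = 2 ** bits - 1           # number of steps between lo and hi
    step = (hi - lo) / levels
    return [lo + round((v - lo) / step) * step for v in values]


# Hypothetical weights, chosen only for demonstration.
weights = [-0.82, -0.31, 0.05, 0.27, 0.64, 0.99]

for bits in (8, 4, 2):
    approx = quantize(weights, bits)
    worst = max(abs(a - b) for a, b in zip(weights, approx))
    print(f"{bits}-bit: worst rounding error = {worst:.4f}")
```

The worst-case rounding error is at most half a quantization step, and that step widens as bits are removed.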

In a new study, MIT and IBM researchers offer a fix: add a mathematical constraint, known as a Lipschitz constraint, during the quantization process. A Lipschitz constraint bounds how much a layer can amplify a change in its input, and keeping it under control during quantization restores some of the model's resilience. “With proper quantization, we can limit the error,” says Dr. Song Han, an assistant professor in MIT’s Department of Electrical Engineering and Computer Science and a member of MIT’s Microsystems Technology Laboratories. The team plans to further improve the technique by training it on larger datasets and applying it to a wider range of models.
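The intuition behind a Lipschitz bound can be sketched in a few lines of Python. This is not the researchers' training procedure, which the article does not detail. It is a toy model that assumes a single hypothetical 2x2 weight matrix `W` reused at every layer and followed by ReLU. Rescaling `W` so that its largest singular value is 1 gives each layer a Lipschitz constant of at most 1, so a small input perturbation cannot grow as it passes through the stack.

```python
import math


def matvec(W, x):
    """Multiply matrix W (a list of rows) by vector x."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]


def norm(x):
    """Euclidean length of a vector."""
    return math.sqrt(sum(v * v for v in x))


def spectral_norm(W, iters=200):
    """Estimate the largest singular value of W by power iteration on W^T W."""
    Wt = [list(col) for col in zip(*W)]
    v = [1.0] * len(W[0])
    for _ in range(iters):
        u = matvec(Wt, matvec(W, v))
        n = norm(u)
        if n == 0.0:
            return 0.0
        v = [ui / n for ui in u]
    return norm(matvec(W, v))


def forward(W, x, depth):
    """Apply the same linear layer followed by ReLU, `depth` times."""
    for _ in range(depth):
        x = [max(0.0, v) for v in matvec(W, x)]
    return x


def output_gap(W, x, delta, depth):
    """Distance between the outputs for x and for the perturbed input x + delta."""
    clean = forward(W, x, depth)
    perturbed = forward(W, [a + b for a, b in zip(x, delta)], depth)
    return norm([a - b for a, b in zip(clean, perturbed)])


# Hypothetical weights and inputs, chosen only for demonstration.
W = [[1.2, 0.3], [-0.2, 0.9]]
sigma = spectral_norm(W)
W_unit = [[w / sigma for w in row] for row in W]  # largest singular value is now 1

x, delta, depth = [1.0, 0.5], [0.01, 0.01], 8
gap_raw = output_gap(W, x, delta, depth)
gap_unit = output_gap(W_unit, x, delta, depth)
print(f"input perturbation size: {norm(delta):.4f}")
print(f"output gap, unconstrained layers: {gap_raw:.4f}")
print(f"output gap, constrained layers:   {gap_unit:.4f}")
```

Because ReLU never increases the distance between two inputs, the constrained stack is guaranteed to keep the output gap no larger than the input perturbation, whatever the depth.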

In making AI models smaller so that they run faster and use less energy, Han is using AI itself to push the limits of model compression technology. In related recent work, Han and his colleagues show how reinforcement learning can be used to automatically find the smallest bit length for each layer in a quantized model based on how quickly the device running the model can process images. This flexible bit-width approach cuts latency and energy use by up to roughly a factor of two compared to a fixed, 8-bit model. The researchers will present their results at the International Conference on Learning Representations (ICLR) in May and at the Computer Vision and Pattern Recognition (CVPR) conference in June.
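As a rough illustration of what a per-layer bit width means, the sketch below compares the storage needed by a fixed 8-bit model with one that assigns a different bit width to each layer. The layer names, parameter counts, and bit assignments are all hypothetical, and the reinforcement learning search itself is not shown. The sketch also measures storage only, whereas the work described above optimizes for measured latency and energy on the target device.

```python
def model_bits(param_counts, bit_widths):
    """Total storage in bits when layer i stores param_counts[i] weights at bit_widths[i] bits."""
    if len(param_counts) != len(bit_widths):
        raise ValueError("need exactly one bit width per layer")
    return sum(n * b for n, b in zip(param_counts, bit_widths))


# Hypothetical three-layer model: (layer name, number of weights).
layers = [("conv1", 864), ("conv2", 18_432), ("fc", 1_280_000)]
counts = [n for _, n in layers]

fixed = model_bits(counts, [8, 8, 8])   # every layer at 8 bits
mixed = model_bits(counts, [8, 6, 4])   # hypothetical per-layer assignment

print(f"fixed 8-bit: {fixed / 8 / 1024:.1f} KiB")
print(f"mixed-width: {mixed / 8 / 1024:.1f} KiB")
```

In this toy setup most of the savings come from the largest layer, which is why choosing bit widths layer by layer can matter more than a single global setting.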
