Why Nonlinear Activation in CNNs?

Convolutional neural networks (CNNs) have received a lot of attention in recent years because of their superior performance on computer vision benchmark datasets. Yet little theory has been developed to explain the principles underlying them.

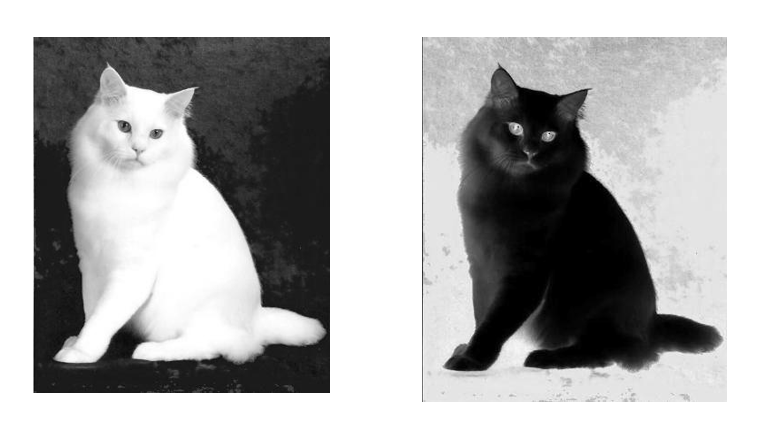

Dr. C.-C. Jay Kuo (LIDS/MIT, PhD, 1987), Distinguished Professor of EECS at the University of Southern California, proposed a mathematical model called “REctified-COrrelations on a Sphere” (RECOS) to explain the need for nonlinear activation (e.g., Sigmoid, ReLU and Leaky ReLU) at the output of each layer. He said, “Nonlinear activation is used to resolve the sign confusion problem existing in multi-layer cascaded networks.” Without rectification, a positive response in a deeper layer can arise either from two positive correlations or from two negative ones, since the product of two negative values is positive, so the network cannot tell which case occurred. As an example, without nonlinear activation the network would be unable to distinguish the two cats shown in the companion photo after two layers because of this sign confusion.
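To make the sign-confusion idea concrete, here is a minimal toy sketch. It is not Kuo's RECOS formulation or his cat example; the anchor vectors `A1` and `b` and the inputs `x1` and `x2` are hypothetical values chosen only for illustration. Each layer simply correlates its input with anchor vectors. Input `x1` is positively correlated with the first layer-1 anchor, while `x2` is negatively correlated with the second one, yet both reach the same layer-2 response unless negative correlations are clipped by a ReLU.

```python
# Toy two-layer "correlation" network illustrating sign confusion.
# All anchor vectors and inputs are hypothetical, chosen for illustration only.

def dot(u, v):
    """Inner product (correlation) of two equal-length vectors."""
    return sum(a * b for a, b in zip(u, v))

def relu(v):
    """Rectify each component: negative correlations are clipped to zero."""
    return [max(0.0, x) for x in v]

# Layer-1 anchor vectors (hypothetical): two orthonormal directions in R^2.
A1 = [[1.0, 0.0], [0.0, 1.0]]
# Layer-2 anchor vector (hypothetical): weights response 1 by +1, response 2 by -1.
b = [1.0, -1.0]

def forward(x, use_relu):
    # Layer 1: correlate the input with each layer-1 anchor vector.
    y = [dot(a, x) for a in A1]
    if use_relu:
        y = relu(y)
    # Layer 2: correlate the layer-1 responses with the layer-2 anchor.
    return dot(b, y)

x1 = [1.0, 0.0]   # positively correlated with anchor 1
x2 = [0.0, -1.0]  # negatively correlated with anchor 2

for use_relu in (False, True):
    print("ReLU" if use_relu else "linear", forward(x1, use_relu), forward(x2, use_relu))
```

Without rectification both inputs produce a layer-2 response of 1.0, because the negative correlation of `x2` is multiplied by a negative weight. With ReLU, `x2` yields 0.0 while `x1` still yields 1.0, so the two inputs become distinguishable.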

His single-authored paper, “Understanding convolutional neural networks with a mathematical model,” published in the Journal of Visual Communication and Image Representation (November 2016), received the 2018 Best Paper Award from the journal.